Job

- Level

- Senior

- Job field

- Data, Back End

- Employment

- Full-time

- Contract type

- Permanent employment

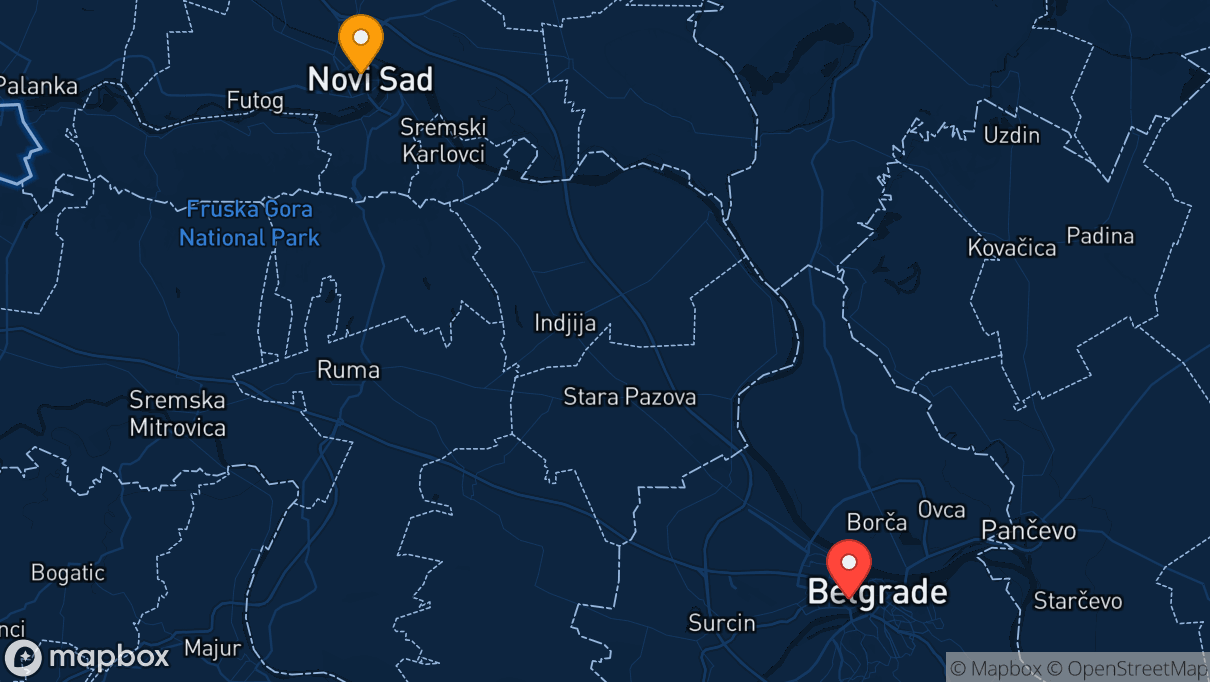

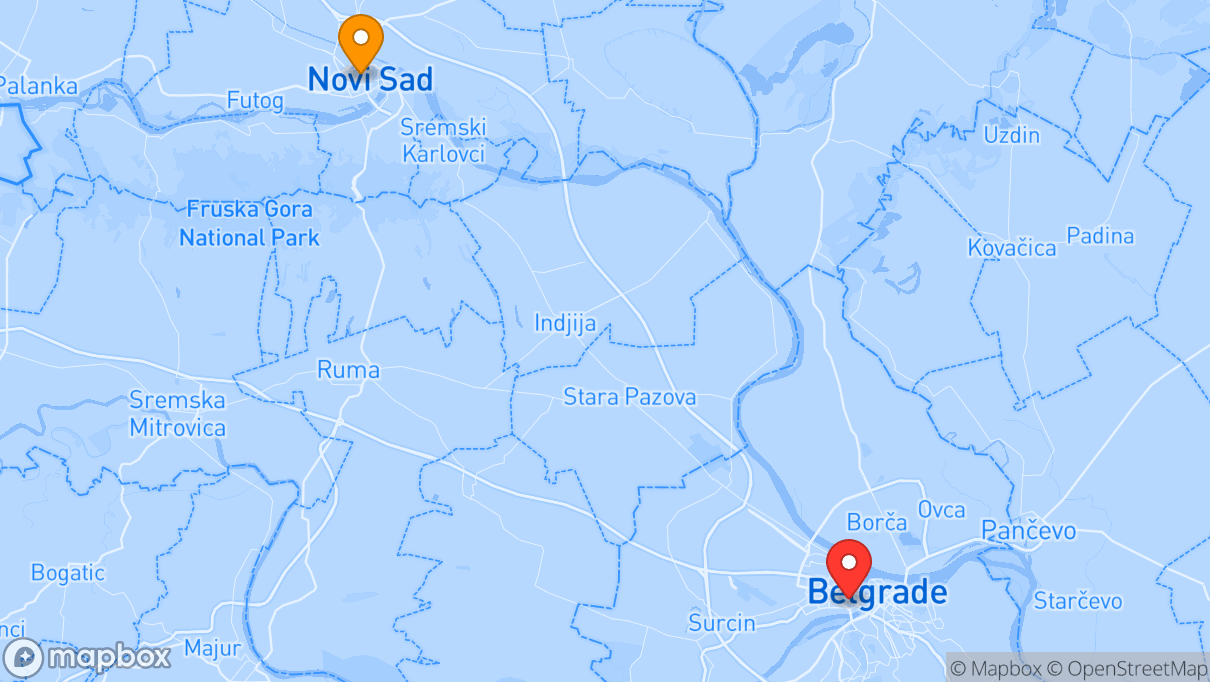

- Location

- Belgrade, Novi Sad

- Work model

- Hybrid, On-site

Job summary

In this role, you develop high-performance data pipelines and ETL/ELT processes with Databricks and Google BigQuery, work on cloud integrations, and advance data modeling within the team to solve complex data challenges.

Your role in the team

As a Data Engineer, you'll play a key role in building modern, scalable, and high-performance data solutions for our clients.

You'll be part of our growing Data & AI team and work hands-on with leading data platforms, supporting clients in solving complex data challenges.

Your key responsibilities:

- Designing, developing, and maintaining robust data pipelines using Databricks, Google BigQuery (Dataflow, Dataform or similar), Spark, and Python.

- Building efficient and scalable ETL/ELT processes to ingest, transform, and load data from various sources (databases, APIs, streaming platforms) into cloud-based data lakes and warehouses.

- Leveraging ecosystems such as Databricks (SQL, Delta Lake, Workflows, Unity Catalog) or Google BigQuery to deliver reliable and performant data workflows.

- Integrating with cloud services such as Azure, AWS, or GCP to enable secure, cost-effective data solutions.

- Contributing to data modeling and architecture decisions to ensure consistency, accessibility, and long-term maintainability of the data landscape.

- Ensuring data quality through validation processes and adherence to data governance policies.

- Collaborating with data scientists and analysts to understand data needs and deliver actionable solutions.

- Staying up to date with advancements in data engineering and cloud technologies to continuously improve our tools and approaches.

What we expect from you

Qualifications

Essential Skills:

- Solid understanding of data warehousing principles, ETL/ELT processes, data modeling techniques, and database systems.

- Excellent SQL proficiency for data querying, transformation, and analysis.

- Excellent communication and collaboration skills in English.

- Ability to work independently as well as part of a team in an agile environment.

Beneficial Skills:

- Understanding of data governance, data quality, and compliance best practices.

- Familiarity with DevOps, Infrastructure-as-Code (e.g., Terraform), and CI/CD pipelines.

Experience

- 3+ years of hands-on experience as a Data Engineer working with either Databricks or Google BigQuery (or both), along with Apache Spark.

- Strong programming skills in Python, with experience in data manipulation libraries (e.g., PySpark, Spark SQL).

- Proven experience with at least one major cloud platform (Azure, AWS, or GCP).

- Experience working in multi-cloud environments.

- Interest in or experience with machine learning or AI technologies.

- Experience with data visualization tools such as Power BI or Looker.

Benefits

More net pay

- 🚙 Pool car

- 💻 Laptop for private use

- 🛍 Employee discounts

- 🎁 Employee gifts

- 📱 Mobile phone for private use

- 🚎 Public transport allowance

Health, Fitness & Fun

- 👨🏻‍🎓 Buddy & mentoring program

- ⚽️ Table football or similar

- 🧠 Mental health care

- 👩‍⚕️ Company doctor

- 🎳 Team events

- 🚲 Bicycle parking

- 🙂 Health promotion

Work-life integration

- 🕺 No dress code

- 🧳 Relocation package

- 🅿️ Employee parking

- 🐕 Pets welcome

- 🏠 Home office

- ⏰ Flexible working hours

- ⏸ Educational leave/sabbatical

- 🚌 Good transport connections

About your employer

NETCONOMY

Graz, Madrid, Belgrade, Novi Sad, Pörtschach, Zurich, Vienna, Dortmund, Amsterdam, Berlin

At NETCONOMY, we create digital platforms that help global brands become digital leaders. For more than 25 years, we have guided our customers through complex digital transformation journeys, designing intelligent solutions that unlock productivity through data and AI and create future-ready business cores. Over 450 professionals across ten European locations make this work possible. What truly defines us is how we work together. Our teams collaborate across locations, with most teamwork happening seamlessly in a remote environment. At the same time, our office locations serve as hubs for in-person exchange and social connection, balanced with the flexibility to also work from home. Not only collaboration but also continuous learning is at the core of our culture. Therefore, we provide diverse development opportunities and evolving career paths across roles and disciplines, empowering our people to learn, develop, and shape their careers in an international environment. Ready to shape the digital future with us? Jump in!

Description

- Company size

- 250+ Employees

- Founded

- 2000

- Languages

- English

- Company type

- Digital agency

- Work model

- Hybrid

- Industry

- Internet, IT, Telecom

Dev Reviews

by devworkplaces.com

Overall (2 reviews)

- Engineering: 3.8

- Career Growth: 3.7

- Working conditions: 4.2

- Culture: 4.5